NonOpt (Nonlinear/nonconvex/nonsmooth Optimizer) is a software package for unconstrained minimization. It is designed to locate a minimizer (or at least a stationary point) of

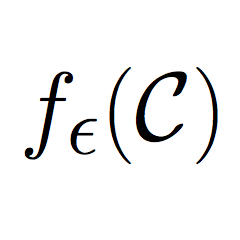

$$\min_{x\in\mathbb{R}^n}\ \ f(x)$$

where \(f : \mathbb{R}^n \to \mathbb{R}\) is locally Lipschitz and continuously differentiable over a full-measure subset of \(\mathbb{R}^n\). The function \(f\) is allowed to be nonconvex.
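The following sketch defines one function from this problem class to make it concrete. It is a chained, nonsmooth variant of the Rosenbrock function, \(f(x)=\sum_{i=1}^{n-1} w\,|x_i^2-x_{i+1}| + (1-x_i)^2\). This function is locally Lipschitz and nonconvex. It is differentiable everywhere except on the measure-zero set where \(x_i^2 = x_{i+1}\) for some \(i\), and its minimizer \(x=(1,\dots,1)\) lies on that set. The sketch is written in Python for brevity and does not use NonOpt's C++ interface. The function itself, the weight \(w=8\), and the helper names `f`, `grad`, and `fd_grad` are illustrative assumptions.

```python
import random

# Illustrative test problem only; this does not use NonOpt's C++ interface.
# Chained nonsmooth Rosenbrock-type function (0-based indices):
#   f(x) = sum_{i=0}^{n-2} W*|x_i^2 - x_{i+1}| + (1 - x_i)^2
# f is locally Lipschitz and nonconvex, and it is differentiable except on the
# measure-zero set where x_i^2 == x_{i+1} for some i.

W = 8.0  # weight on the nonsmooth term (an assumed, illustrative value)


def f(x):
    """Objective value at x (a list of floats with len(x) >= 2)."""
    return sum(W * abs(x[i] ** 2 - x[i + 1]) + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))


def grad(x):
    """Gradient of f wherever it exists; at a kink it uses sign(0) = 0 by convention."""
    g = [0.0] * len(x)
    for i in range(len(x) - 1):
        r = x[i] ** 2 - x[i + 1]
        s = (r > 0) - (r < 0)  # sign of r as -1, 0, or 1
        g[i] += 2.0 * W * s * x[i] - 2.0 * (1.0 - x[i])
        g[i + 1] -= W * s
    return g


def fd_grad(x, h=1e-6):
    """Central finite-difference gradient; only meaningful away from kinks."""
    g = []
    for j in range(len(x)):
        xp = list(x)
        xm = list(x)
        xp[j] += h
        xm[j] -= h
        g.append((f(xp) - f(xm)) / (2.0 * h))
    return g


if __name__ == "__main__":
    # The minimum value 0 is attained at x = (1, ..., 1), which lies on a kink.
    print(f([1.0, 1.0, 1.0]))  # 0.0
    # A randomly drawn point is almost surely a point of differentiability, so
    # (unless it happens to lie within h of a kink) the two gradients agree there.
    random.seed(0)
    x = [random.uniform(-2.0, 2.0) for _ in range(4)]
    err = max(abs(a - b) for a, b in zip(grad(x), fd_grad(x)))
    print(err < 1e-4)
```

Checking the analytic gradient against finite differences at random points is a cheap sanity test. It is worth running before handing any such problem to a solver, because the gradient is only required to be correct on the full-measure set where \(f\) is differentiable.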

NonOpt is written in C++. It is available at the NonOpt page on GitHub.

We are looking for test problems! If you have nonlinear/nonsmooth/nonconvex test problems, please let us know. We would be very interested in testing (and tuning) NonOpt with them.


Citing NonOpt

NonOpt is based on the algorithms described in the following papers.

- Frank E. Curtis, Daniel P. Robinson, and Baoyu Zhou. A Self-Correcting Variable-Metric Algorithm Framework for Nonsmooth Optimization. IMA Journal of Numerical Analysis, 40(2):1154–1187, 2020.

@article{CurtRobiZhou20, author = {Frank E. Curtis and Daniel P. Robinson and Baoyu Zhou}, title = {{A Self-Correcting Variable-Metric Algorithm Framework for Nonsmooth Optimization}}, journal = {{IMA Journal of Numerical Analysis}}, volume = {40}, number = {2}, pages = {1154--1187}, year = {2020}, url = {https://academic.oup.com/imajna/article/40/2/1154/5369122?guestAccessKey=b7a4f0fe-8dc4-4bd7-9418-8b1e3335813d} }

- Frank E. Curtis and Xiaocun Que. A Quasi-Newton Algorithm for Nonconvex, Nonsmooth Optimization with Global Convergence Guarantees. Mathematical Programming Computation, 7(4):399–428, 2015.

@article{CurtQue15, author = {Frank E. Curtis and Xiaocun Que}, title = {{A Quasi-Newton Algorithm for Nonconvex, Nonsmooth Optimization with Global Convergence Guarantees}}, journal = {{Mathematical Programming Computation}}, volume = {7}, number = {4}, pages = {399--428}, year = {2015}, url = {http://coral.ise.lehigh.edu/frankecurtis/files/papers/CurtQue15.pdf} }

- Frank E. Curtis and Xiaocun Que. An Adaptive Gradient Sampling Algorithm for Nonsmooth Optimization. Optimization Methods and Software, 28(6):1302–1324, 2013.

@article{CurtQue13, author = {Frank E. Curtis and Xiaocun Que}, title = {{An Adaptive Gradient Sampling Algorithm for Nonsmooth Optimization}}, journal = {{Optimization Methods and Software}}, volume = {28}, number = {6}, pages = {1302--1324}, year = {2013}, url = {http://coral.ise.lehigh.edu/frankecurtis/files/papers/CurtQue13.pdf} }